PROJECT SUMMARY

INDUSTRY

Retail

Data science

COLLABORATION

Outstaffing engagement

CHALLENGE

No real-time ML recommendation system

Inefficient Spark streaming implementation

Databricks jobs required optimization

Self-hosted Airflow migration needed

RESULTS

30x AWS costs cut

30% Databricks cost savings

4 years of ongoing collaboration

We provided specialized DevOps expertise to a data science team working with a top-100 US fashion and apparel company. The end client operates a flash sales and e-commerce platform serving 4 million monthly users, focusing on exclusive sales events and limited-time offers.

The data science team contracted our services to help implement real-time recommendation capabilities while managing AWS infrastructure costs. Their existing approach used Apache Spark structured streaming, which resulted in operational expenses of several thousand dollars per month.

Their team required specialized expertise to build scalable, cost-effective solutions for real-time data processing and machine learning inference. We joined the effort to help the client rethink their real-time architecture and work through these challenges step by step.

Outstaffing support and development process

We supported the six-person data science team through outstaffing. Our Python-proficient DevOps architect collaborated directly with their team to design and implement AWS infrastructure improvements.

The engagement started with an initial commitment of six months, which has since been extended into the third term.

Our goal was to keep the legacy code maintained while building new infrastructure on top of it.

Real-time personalized predictions

First, we replaced the client’s Apache Spark structured streaming POC with a serverless architecture using AWS Lambda functions:

- Built a pipeline of AWS Lambda functions to process real-time data from web and mobile applications

- Created a rolling window mechanism for each user to collect event batches for inference

- Deployed the ML model to Amazon SageMaker real-time inference endpoints

- Developed a custom Docker image to support the client’s ML model, which SageMaker doesn’t natively support

- Stored inference results in DynamoDB tables

- Exposed results through AWS API Gateway for consumer applications

- Set up CloudWatch health monitors, alerts, and dashboards for service monitoring
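The per-user rolling window from the list above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the client's implementation: the window size, the minimum batch size, the event fields (`user_id`, `item`), and the names `UserEventWindows` and `process_event` are all hypothetical, and the in-memory dictionary stands in for whatever persistent store held the windows between Lambda invocations. The `predict` argument stands in for a call to the model endpoint.

```python
from collections import defaultdict, deque
from typing import Callable, Deque, Dict, List, Optional

# Hypothetical sizes: the case study does not state the real window parameters.
WINDOW_SIZE = 5  # events kept per user
MIN_EVENTS = 3   # events required before the model is called


class UserEventWindows:
    """Keeps the most recent events for each user in a fixed-size rolling window."""

    def __init__(self, window_size: int = WINDOW_SIZE) -> None:
        # In-memory stand-in for a persistent store (assumption).
        self._windows: Dict[str, Deque[dict]] = defaultdict(
            lambda: deque(maxlen=window_size)
        )

    def add(self, user_id: str, event: dict) -> List[dict]:
        """Append an event and return the user's current window, oldest first."""
        window = self._windows[user_id]
        window.append(event)  # a full deque drops its oldest event automatically
        return list(window)


def process_event(
    windows: UserEventWindows,
    event: dict,
    predict: Callable[[List[dict]], object],
    min_events: int = MIN_EVENTS,
) -> Optional[object]:
    """Update the user's window and run inference once enough events exist.

    Returns None while the window is still too short to form a batch.
    """
    batch = windows.add(event["user_id"], event)
    if len(batch) < min_events:
        return None
    return predict(batch)
```

Calling `process_event` once per incoming event keeps each user's batch bounded, so the payload sent for inference stays a fixed maximum size regardless of how active the user is.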

Dynamic real-time activity trend computation

Next, we built a separate service to calculate activity trends for products and product collections in real time:

- Created a pipeline of AWS Lambda functions to process event data

- Used DynamoDB as the persistent data store

- Implemented DynamoDB Streams (change data capture) for cost-effective data processing

- Built a rolling window function for data aggregation

- Added variable time width to account for differences in day and night user activity patterns

- Deployed results via AWS API Gateway and Lambda functions

The service was designed to handle a sustained load of 500–700 requests per second, ensuring stable performance without critical overloads. Additionally, the processing tasks were required to be completed within strict business-driven deadlines to support timely decision-making.
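A rolling window with a variable time width, fed by change records from a DynamoDB stream, might look like the sketch below. It is an illustration only: the window widths, the day and night hour boundaries, the attribute names `product_id` and `ts`, and the names `TrendTracker` and `handle_stream_event` are assumptions, since the case study gives no concrete values. The record layout (`Records`, `eventName`, `dynamodb.NewImage` with typed `S` and `N` values) follows the standard DynamoDB Streams event format, and the sketch assumes timestamps arrive in order.

```python
from collections import defaultdict, deque
from datetime import datetime, timezone
from typing import Deque, Dict

# Hypothetical widths and hours: not taken from the case study.
DAY_WINDOW_S = 15 * 60    # narrower window while traffic is high
NIGHT_WINDOW_S = 60 * 60  # wider window while traffic is low
DAY_START_HOUR, DAY_END_HOUR = 8, 22  # UTC hours treated as "day"


def window_width(ts: float) -> int:
    """Return the window width in seconds for a Unix timestamp."""
    hour = datetime.fromtimestamp(ts, tz=timezone.utc).hour
    return DAY_WINDOW_S if DAY_START_HOUR <= hour < DAY_END_HOUR else NIGHT_WINDOW_S


class TrendTracker:
    """Counts recent events per product inside a time-based rolling window."""

    def __init__(self) -> None:
        self._events: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, product_id: str, ts: float) -> None:
        self._events[product_id].append(ts)

    def activity(self, product_id: str, now: float) -> int:
        """Evict events older than the window ending at `now`, then count the rest."""
        cutoff = now - window_width(now)
        events = self._events[product_id]
        while events and events[0] < cutoff:
            events.popleft()
        return len(events)


def handle_stream_event(event: dict, tracker: TrendTracker) -> int:
    """Record INSERT records from a DynamoDB Streams batch; return how many were recorded."""
    recorded = 0
    for record in event.get("Records", []):
        if record.get("eventName") != "INSERT":
            continue  # this sketch ignores MODIFY and REMOVE records
        image = record["dynamodb"]["NewImage"]
        tracker.record(image["product_id"]["S"], float(image["ts"]["N"]))
        recorded += 1
    return recorded
```

Widening the window at night keeps the counts from dropping to zero when traffic is sparse, while the narrower daytime window lets the trend react quickly during busy hours.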

Apache Airflow migration

The migration involved moving the client’s self-hosted Airflow instance to Amazon MWAA (Managed Workflows for Apache Airflow). Our team ported the DAG code from the legacy Airflow version to the latest MWAA-supported version while refactoring it to align with Airflow best practices. Workflow restructuring reduced database load, and code optimization improved service stability.

Databricks jobs optimization

Finally, we optimized the most expensive Spark jobs running on the Databricks platform:

- Analyzed job execution patterns and resource usage to identify optimization opportunities

- Split jobs into parallel tasks to improve cluster resource utilization

- Restructured data processing graphs to eliminate redundant operations

- Optimized job queues and transformation paths to streamline processing

- Unified filtering and field selection to remove duplication

- Implemented synchronous and asynchronous query execution based on context

- Redesigned and enhanced caching strategies

- Applied clustering techniques to improve data organization and query performance
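As one illustration of choosing between synchronous and asynchronous execution, the sketch below runs a set of named query callables either one by one or concurrently. It is a generic pattern using Python's standard library, not the client's Databricks code: the function name `run_queries`, the `parallel` flag, and the idea of wrapping each query in a callable are assumptions made for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def run_queries(
    queries: Dict[str, Callable[[], object]],
    parallel: bool,
    max_workers: int = 4,
) -> Dict[str, object]:
    """Run named query callables sequentially or concurrently.

    Sequential mode suits queries that depend on each other's side effects;
    concurrent mode suits independent queries that mostly wait on the cluster.
    """
    if not parallel:
        return {name: query() for name, query in queries.items()}

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(query) for name, query in queries.items()}
        # result() blocks until the query finishes and re-raises its exception, if any.
        return {name: future.result() for name, future in futures.items()}
```

Independent aggregations can be submitted with `parallel=True`, while a step that must see the output of a previous one stays in a sequential call.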

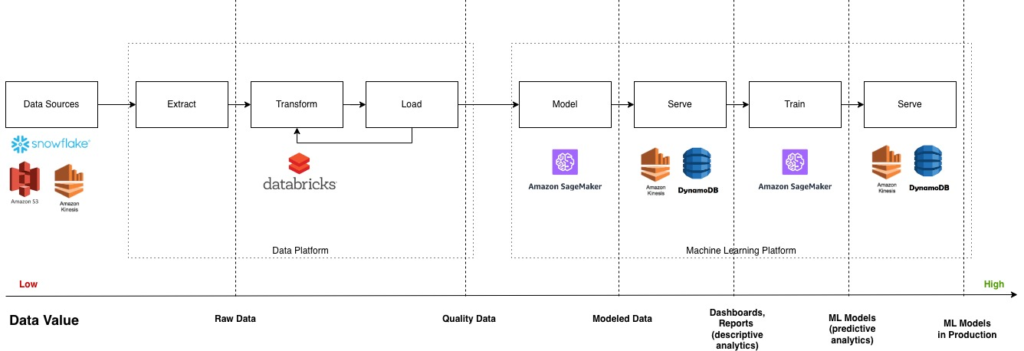

Data value chain

The data value chain encapsulates the journey of data from raw input to actionable business insights. It includes stages such as data collection, processing, modeling, and deployment, each adding value and enabling informed decision-making.

This case study demonstrates how we applied this model to transform the client’s data infrastructure and optimize real-time analytics. The implemented solutions at each stage – from data extraction and transformation using Databricks to machine learning model deployment with SageMaker – work together to maximize data value and operational efficiency.

The following diagram illustrates this flow and highlights the increasing value of data as it progresses through the system.

- Extract: Data is collected from multiple sources, including Snowflake, Amazon S3, and other storage systems.

- Transform: Databricks processes and aggregates the raw data, converting it into quality data suitable for modeling.

- Load: Transformed data is loaded back into the data platform for further use.

- Model: Machine learning models are developed and deployed using Amazon SageMaker.

- Serve: Inference results are served through AWS services such as Kinesis and DynamoDB, enabling real-time predictions.

- Train: Models are continuously retrained in SageMaker to improve accuracy.

- Serve (Production): Updated models are deployed to production environments, delivering predictive analytics to end users.

This structured flow ensures data quality improves at each stage, increasing its value from raw input to production-ready machine learning models. The architecture supports real-time analytics and scalable deployment across the client’s platform.

CI/CD implementation

We established a standardized development workflow for the client’s data science team to address challenges related to code management, deployment consistency, and collaboration efficiency.

Previously, the team had difficulty managing a monolithic codebase, coordinating changes across multiple contributors, and deploying reliably without manual errors.

Implementing CI/CD introduced automated testing, code reviews, and streamlined deployment pipelines, which reduced integration issues and deployment times. This approach enabled faster delivery of new features and bug fixes while maintaining code quality and system stability. The work included:

- Split code from the mono-repository into separate repositories

- Created naming conventions and structure guidelines for new repositories and services

- Implemented branch-based development workflow with pull request reviews

- Set up automated testing in test environments

- Configured automatic deployment to production after pull request approval

Before implementing CI/CD, deployments occurred approximately once per year. With the new processes in place, deployment frequency increased to about four times per year, enabling more agile and reliable software delivery.

Collaboration approach

The client’s team conducts daily standups and code reviews internally. We maintain alignment through weekly check-ins and additional contact as needed – daily communication happens directly with the client’s team lead. This provides clear channels for task assignments and clarifications.

Project decisions are made collaboratively. As the client recognized our expertise, they became more receptive to our recommended solutions while we maintained focus on their business priorities.

Tech stack

We built the solution using AWS services and data platforms that support real-time processing and cost-efficient operations. The architecture combines serverless components for event processing with managed services for data storage and ML model deployment.

Technology

Description

Purpose

Analytical service for processing big data (compatible with Apache Spark code).

Facilitating seamless aggregation and processing of large-scale data.

Providing a robust repository for storing and managing datasets.

Empowering the creation, training, and deployment of accurate machine learning models for predictive inferences.

Enabling efficient collection, processing, and analysis of real-time data streams with scalability.

Results

We reduced the client’s AWS operational expenses from several thousand dollars per month to $80 per month, a more than 30-fold reduction.

The client gained two new capabilities:

- Personalized real-time forecasting: The recommendation service delivers tailored product recommendations to customers in real time based on their browsing and purchase behavior.

- Dynamic activity trend analysis: The service calculates activity trends for products and product collections, providing the data science team with insights for decision-making.

Beyond cost savings, we improved the overall infrastructure stability and development workflow. Databricks job costs decreased by 30% for optimized jobs, while the Airflow migration to MWAA and code refactoring eliminated stability issues.

We established repeatable CI/CD processes that enabled the data science team to deploy changes faster and more reliably.

Conclusion

The project with the client has spanned nearly four years and remains ongoing, focusing on continuous development rather than just support. Throughout this time, we have delivered significant improvements in real-time data processing, cost optimization, and infrastructure stability.

The collaboration has been marked by a strong partnership and mutual trust, exemplified by the client’s feedback: “Can we please clone your DevOps engineer?”

Need to optimize your data science operations and reduce infrastructure costs? Contact us to discuss how we can help you achieve scalable, efficient, and reliable real-time analytics tailored to your business needs.
