Info Setronica

As an experiment, one of our frontend developers set out to build a working MVP of an AI agent marketplace from scratch – in 2 weeks and roughly 40 hours of part-time work.

The goal wasn’t to ship a full product but to see how far a small team (or even a single developer) can go using modern tools and AI pair programming.

This article breaks down that process – what worked, what didn’t, and where AI actually made a difference.

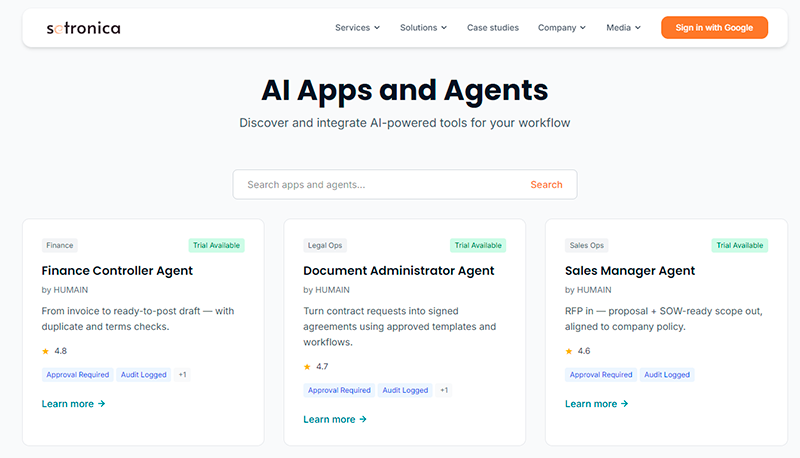

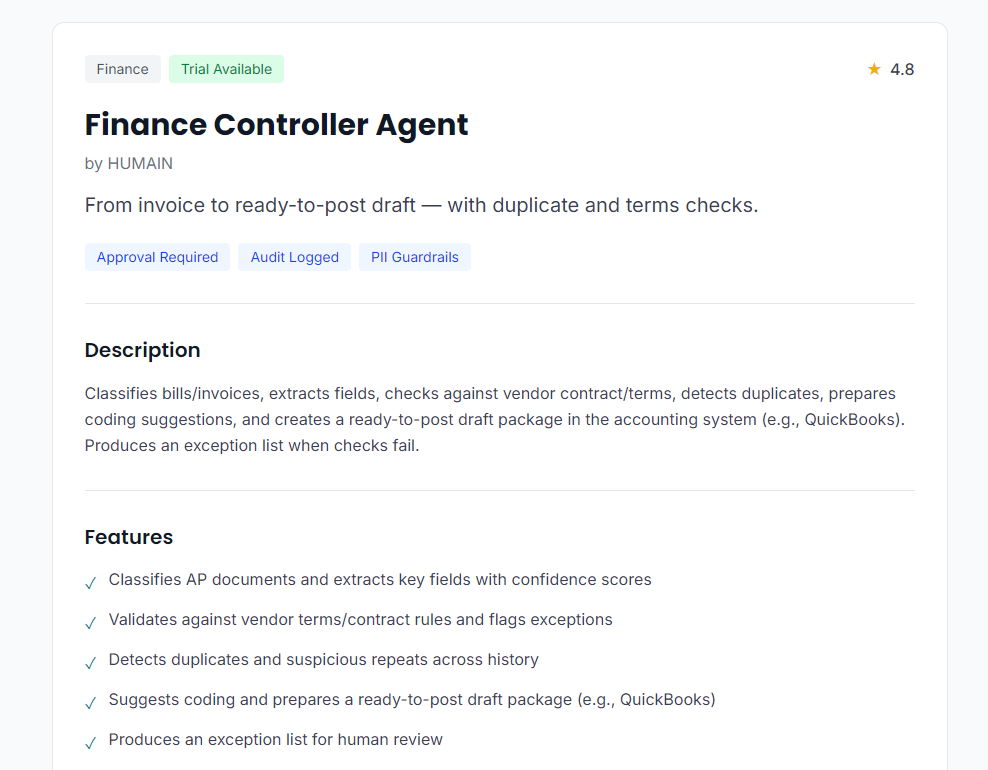

At its core, the product is a simple marketplace for AI agents. In this context, an “AI agent” is a predefined AI setup designed to perform a specific task. It typically includes a system prompt, a set of tools, and constraints that make it more reliable for a narrow use case.
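In catalog terms, each entry could describe such an agent with a small typed record. The sketch below is illustrative only: the field names (`slug`, `systemPrompt`, `tools`, `constraints`), the example agent, and the `isAgentDefinition` guard are hypothetical, not the project's actual schema.

```typescript
// Hypothetical shape of an "AI agent" as described above: a system prompt,
// a set of tools, and constraints that narrow it to one task.
interface AgentTool {
  name: string;        // identifier the model uses to call the tool
  description: string; // what the tool does, shown to the model
}

interface AgentConstraints {
  maxOutputTokens?: number; // optional cap on response length
  topics?: string[];        // optional list of topics the agent should stick to
}

interface AgentDefinition {
  slug: string;
  name: string;
  description: string;
  systemPrompt: string;
  tools: AgentTool[];
  constraints: AgentConstraints;
}

// Example entry (hypothetical values, for illustration only).
const summarizer: AgentDefinition = {
  slug: "meeting-summarizer",
  name: "Meeting Summarizer",
  description: "Turns meeting transcripts into short action-item lists.",
  systemPrompt:
    "You summarize meeting transcripts. Output only a bulleted list of action items.",
  tools: [{ name: "fetch_transcript", description: "Loads a transcript by ID." }],
  constraints: { maxOutputTokens: 500 },
};

// Minimal runtime check that an unknown value (e.g. parsed JSON) looks like an AgentDefinition.
function isAgentDefinition(value: unknown): value is AgentDefinition {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  return (
    typeof v.slug === "string" &&
    typeof v.name === "string" &&
    typeof v.description === "string" &&
    typeof v.systemPrompt === "string" &&
    Array.isArray(v.tools) &&
    typeof v.constraints === "object" &&
    v.constraints !== null
  );
}
```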

For this experiment, the focus was on the catalog layer rather than the agents themselves.

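The MVP includes a catalog of AI agents with search, filtering, and pagination; a dedicated page for each agent; Google authentication; and basic analytics.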

From a technical perspective, this is not a full platform yet. Instead of a traditional database, the catalog is stored in a static JSON file. There is no admin panel or CMS at this stage.

The system is designed to be extended later, but the current version is intentionally minimal.

It’s also worth noting that the product side is still loosely defined. The focus here was on testing the approach and building a functional foundation rather than validating a specific business model.

The project started with a small but clearly defined scope: a catalog of five agents, basic search, and authentication.

Instead of designing everything upfront, the approach was to get a working version as quickly as possible and iterate from there.

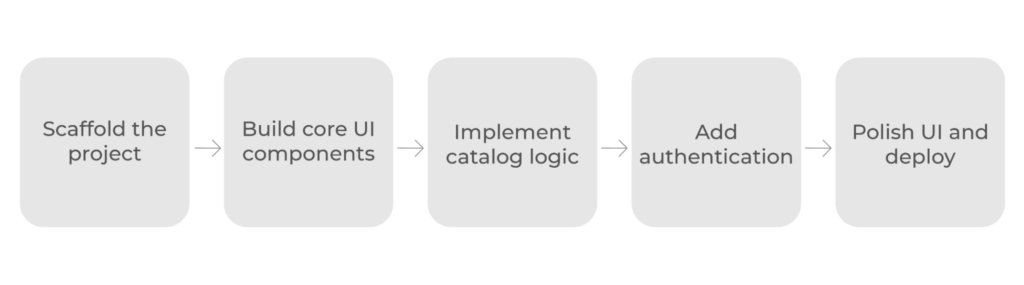

The process followed a simple sequence:

Several things were intentionally left out:

Every decision was guided by a simple question: does this help us reach a working MVP faster?

Before getting into implementation details, it’s worth looking at a few key decisions that shaped the project. At this stage, the goal wasn’t to find the “perfect” architecture but to choose tools and approaches that would allow moving quickly without blocking future changes.

The project uses a fairly standard stack: Next.js with App Router and Server Components, Tailwind CSS, TypeScript, and NextAuth for authentication.

The main reason for this choice was speed. Next.js provides routing, server-side rendering, and SEO capabilities out of the box, which removes a lot of setup work. Tailwind helps build interfaces quickly without designing a system from scratch, while TypeScript adds a layer of safety without significantly slowing development.

At the same time, this setup is not the simplest possible option. For a five-page MVP, Next.js can be seen as overkill. A lighter framework or even a static setup could have worked. However, in this case, the trade-off was intentional: slightly more complexity upfront in exchange for flexibility later and an opportunity to work hands-on with a widely used stack.

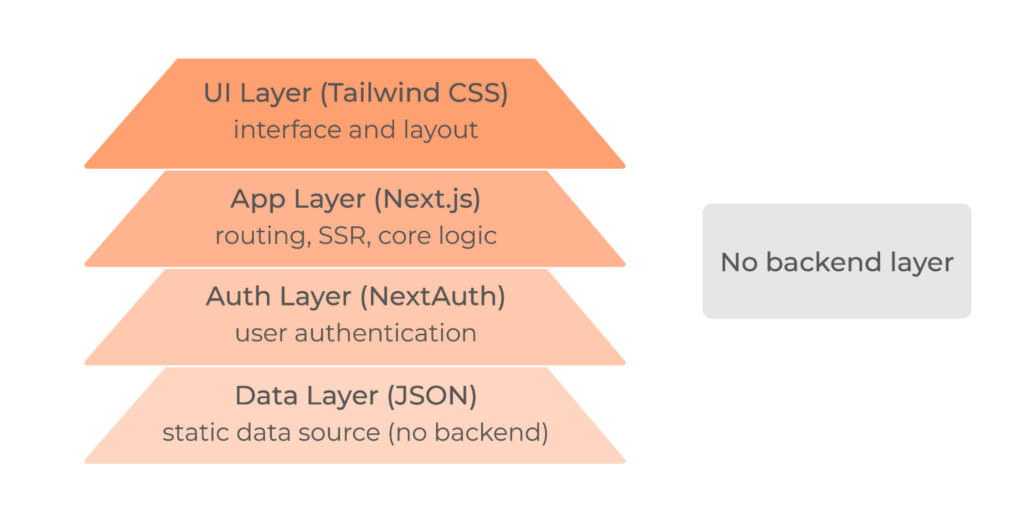

The architecture was kept intentionally simple.

Instead of introducing a database early on, the catalog is stored in a static JSON file. This removes the need for backend infrastructure and keeps the system easy to understand and modify. At the same time, the codebase is structured so that the JSON layer can later be replaced with a real database without major refactoring.
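One common way to get that property is to hide the data source behind a small interface, so pages depend on the interface rather than on the JSON file. This is a sketch of the pattern under assumptions: the names `Agent`, `AgentRepository`, and `JsonAgentRepository` and the agent fields are hypothetical, not the project's actual code.

```typescript
// Minimal catalog entry (hypothetical fields).
interface Agent {
  slug: string;
  name: string;
  description: string;
  tags: string[];
}

// Pages and components depend only on this interface.
interface AgentRepository {
  list(): Promise<Agent[]>;
  getBySlug(slug: string): Promise<Agent | undefined>;
}

// JSON-backed implementation. It receives the raw JSON text instead of reading
// the file itself, so the caller decides where the data comes from.
class JsonAgentRepository implements AgentRepository {
  private readonly agents: Agent[];

  constructor(rawJson: string) {
    const parsed: unknown = JSON.parse(rawJson);
    if (!Array.isArray(parsed)) {
      throw new Error("Catalog JSON must be an array of agents");
    }
    this.agents = parsed as Agent[];
  }

  async list(): Promise<Agent[]> {
    return [...this.agents]; // copy so callers cannot mutate the catalog
  }

  async getBySlug(slug: string): Promise<Agent | undefined> {
    return this.agents.find((agent) => agent.slug === slug);
  }
}
```

The methods are async even though the JSON version does not need to be, so a database-backed class implementing the same interface could later replace `JsonAgentRepository` without changing the pages that call it. Passing the data in, rather than reading the file inside the class, also lets tests build a repository from a string instead of mocking the filesystem.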

One of the more important decisions was how to handle search and filtering.

Rather than keeping them in client-side state, the application stores all search and filter parameters directly in the URL. This noticeably changes how the application behaves: pages remain fully server-rendered, which simplifies the data flow and avoids synchronization issues between client and server.

It also improves usability in subtle ways. Users can share filtered results via a link, refresh the page without losing state, and use browser navigation naturally. These are small details individually, but together they make the application feel more predictable and robust.
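A minimal sketch of the idea: filter state is parsed from the query string on the server and serialized back into links. The parameter names (`q`, `category`, `page`) and the `CatalogFilters` type are assumptions for illustration; only the standard `URLSearchParams` API is used.

```typescript
// Filter state that lives entirely in the URL (hypothetical parameter names).
interface CatalogFilters {
  q: string;         // free-text search
  category?: string; // optional category filter
  page: number;      // 1-based page number
}

// Read filters from a query string, falling back to safe defaults.
function parseFilters(params: URLSearchParams): CatalogFilters {
  const rawPage = Number.parseInt(params.get("page") ?? "1", 10);
  return {
    q: (params.get("q") ?? "").trim(),
    category: params.get("category") || undefined,
    page: Number.isFinite(rawPage) && rawPage > 0 ? rawPage : 1,
  };
}

// Serialize filters back into a query string, omitting defaults
// so shared links stay short.
function toQueryString(filters: CatalogFilters): string {
  const params = new URLSearchParams();
  if (filters.q) params.set("q", filters.q);
  if (filters.category) params.set("category", filters.category);
  if (filters.page > 1) params.set("page", String(filters.page));
  const query = params.toString();
  return query ? `?${query}` : "";
}
```

Because the page rebuilds its state from the URL on every request, refresh, shared links, and the browser's Back button all restore the same view without extra client code. In a Next.js page, the values would come from the `searchParams` prop, which is not a `URLSearchParams` instance, so it would need adapting before being passed to a parser like this.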

Styling went through a noticeable evolution during the project.

It started with a pure Tailwind approach, which is a natural choice when speed is a priority. Tailwind makes it easy to build layouts quickly, and its utility-first model reduces the need to jump between files.

However, as the interface grew, some drawbacks became more visible. Long chains of utility classes made JSX harder to read, and maintaining consistency across components required more discipline than expected. On top of that, getting comfortable with Tailwind’s conventions involved a learning curve of its own.

Things changed further when a corporate brandbook was introduced. At that point, the project needed a more structured way to handle design tokens such as colors, typography, and spacing.

Instead of abandoning Tailwind, the project shifted to a hybrid model. Tailwind remained useful for layout and spacing, but CSS custom properties were introduced as a source of truth for design tokens. For more complex components, BEM-style classes were used to keep styles readable and maintainable.
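A sketch of how a single token definition could feed both layers: tokens are emitted as CSS custom properties, and the Tailwind theme refers to them through `var()`. The token names and hex values are placeholders, not the actual brandbook, and the object returned by the hypothetical `tailwindThemeExtension` is assumed to be merged into `theme.extend` of a Tailwind v3-style config.

```typescript
// Single source of truth for design tokens (placeholder values, not the real brandbook).
const tokens = {
  color: {
    primary: "#1a56db",
    surface: "#ffffff",
    text: "#111827",
  },
  radius: {
    card: "12px",
  },
} as const;

type TokenGroups = typeof tokens;

// Emit tokens as CSS custom properties, e.g. "--color-primary: #1a56db;".
function toCssVariables(groups: TokenGroups): string {
  const lines: string[] = [];
  for (const [group, values] of Object.entries(groups)) {
    for (const [name, value] of Object.entries(values)) {
      lines.push(`  --${group}-${name}: ${value};`);
    }
  }
  return `:root {\n${lines.join("\n")}\n}`;
}

// Build a Tailwind theme fragment that points at the variables instead of raw values,
// so utilities such as `bg-primary` or `rounded-card` resolve to the CSS variables.
function tailwindThemeExtension(groups: TokenGroups) {
  const colors: Record<string, string> = {};
  for (const name of Object.keys(groups.color)) {
    colors[name] = `var(--color-${name})`;
  }
  const borderRadius: Record<string, string> = {};
  for (const name of Object.keys(groups.radius)) {
    borderRadius[name] = `var(--radius-${name})`;
  }
  return { colors, borderRadius };
}
```

BEM-style component classes can then use the same variables in plain CSS (for example `background: var(--color-surface)`), so utility classes and component styles stay in sync when a token changes.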

In practice, this combination turned out to be more flexible than trying to enforce a single styling approach across the entire project.

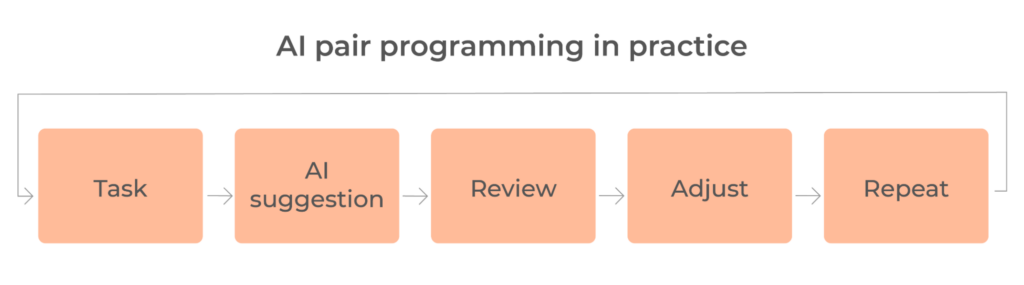

A significant part of this project was built using AI pair programming, but in practice, this was less about automation and more about a different way of working.

The process was iterative. A task would start with a short description – what needs to be built, how it should behave, and any constraints. Based on that, the AI would suggest an approach or generate an initial implementation. From there, the solution was reviewed, adjusted, and often partially rewritten.

This back-and-forth turned out to be more important than the initial output. The quality of the result depended heavily on how clearly the problem was defined. Vague requests usually led to generic or suboptimal solutions, while well-scoped tasks produced much more usable results.

One of the less obvious benefits was how effective AI was as a learning tool. Working with a new stack usually involves a lot of context switching, but here much of that process happened inline – with explanations and examples directly in the code. This didn’t replace learning, but it made getting up to speed noticeably faster.

Over time, AI was also used to explore unfamiliar parts of the stack – particularly Next.js, Server Components, and Tailwind – not just by generating code, but by explaining why certain approaches work better than others.

In some cases, it even influenced technical decisions. For example, it suggested storing filters in the URL instead of local state and helped avoid premature abstractions. These were not always obvious choices upfront, but they proved to be more practical in the context of an MVP.

The most noticeable impact was speed, especially when working with technologies that were not part of the developer’s primary stack.

Coming from an Angular background, adapting to React and Next.js would normally require a significant ramp-up. In this case, AI reduced that overhead. Instead of switching between documentation and implementation, both happened in parallel.

It also helped streamline routine work. Generating components, connecting basic logic, and setting up configuration files took significantly less time. These are not complex tasks, but they tend to accumulate, and reducing that overhead made the overall process smoother.

At the same time, the output could not be used blindly. AI-generated code consistently required review. Most issues were relatively minor – layout inconsistencies, missing styles, or small UI edge cases – but they were frequent enough to make manual validation necessary.

More importantly, AI does not remove the need for architectural thinking. It can suggest approaches and compare options, but it does not have the full context of the project. Final decisions still depend on the developer, especially when it comes to trade-offs.

AI worked best as an assistant, not as an autonomous builder. It improved speed and reduced friction, but the direction of the project still depended on the developer.

The most time-consuming part of the project turned out to be frontend work, particularly styling.

This was partly due to working with a less familiar stack and getting used to Tailwind at the same time. Even relatively simple layout tasks required more iterations than expected.

There were also several technical challenges that added friction along the way. Pagination, for example, required multiple attempts to get both alignment and behavior right. The testing setup introduced its own issues, especially when trying to mock filesystem access. Docker configuration caused a few unexpected build-time errors related to environment variables.
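On the behavior side, pagination logic typically has to cope with edge cases such as out-of-range page numbers in the URL and an empty result set. Keeping that logic in a small pure function makes it testable independently of the layout. The function name and return shape below are hypothetical, not taken from the project.

```typescript
interface Page<T> {
  items: T[];
  page: number;       // current page after clamping (1-based)
  totalPages: number; // always at least 1, even for an empty list
  hasPrevious: boolean;
  hasNext: boolean;
}

// Slice `items` into one page, clamping out-of-range page numbers
// (e.g. ?page=999 or ?page=0 in the URL) into the valid range.
function paginate<T>(items: readonly T[], requestedPage: number, pageSize: number): Page<T> {
  if (!Number.isInteger(pageSize) || pageSize <= 0) {
    throw new Error("pageSize must be a positive integer");
  }
  const totalPages = Math.max(1, Math.ceil(items.length / pageSize));
  const safeRequested = Number.isFinite(requestedPage) ? Math.floor(requestedPage) : 1;
  const page = Math.min(Math.max(1, safeRequested), totalPages);
  const start = (page - 1) * pageSize;
  return {
    items: items.slice(start, start + pageSize),
    page,
    totalPages,
    hasPrevious: page > 1,
    hasNext: page < totalPages,
  };
}
```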

None of these issues were critical, but together they slowed down progress and required constant context switching.

Looking back, a few decisions can be clearly categorized as unnecessary for this stage.

One example is the attempt to move navigation into a separate JSON configuration. The idea was to allow non-developers to update it without triggering a deployment. In practice, this added an extra layer of complexity without solving a real problem, so the change was eventually rolled back.

A similar situation happened with Figma exports. Automating the process seemed like a good investment, but it quickly ran into practical limitations such as API rate limits and parsing issues. For the scope of this project, manual export turned out to be the more efficient option.

There were also moments where more complex architectural changes were considered, especially around styling. These ideas were not wrong, but they were not necessary at the MVP stage and would have slowed things down.

Overall, the pattern is fairly typical: many reasonable ideas become counterproductive when the main goal is speed.

After 2 weeks, the result is a working MVP that covers the core use case without unnecessary complexity.

At its center is a catalog of AI agents with search, filtering, and pagination. Each agent has its own page with basic SEO setup, and users can authenticate via Google. The interface is responsive and aligned with a brandbook, while analytics provide a minimal level of visibility into usage.
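Since search here is basic (no full-text index or ranking), it can be as simple as a case-insensitive substring match combined with an exact category filter over the in-memory catalog. The sketch below assumes a hypothetical `CatalogAgent` shape; it is an illustration of the approach, not the project's implementation.

```typescript
interface CatalogAgent {
  slug: string;
  name: string;
  description: string;
  category: string;
  tags: string[];
}

// Basic search: case-insensitive substring match on name, description, and tags,
// optionally narrowed to a single category. No ranking or full-text indexing.
function searchAgents(
  agents: readonly CatalogAgent[],
  query: string,
  category?: string,
): CatalogAgent[] {
  const needle = query.trim().toLowerCase();
  return agents.filter((agent) => {
    if (category && agent.category !== category) return false;
    if (!needle) return true; // blank query: keep everything that passed the category filter
    const haystack = [agent.name, agent.description, ...agent.tags].join(" ").toLowerCase();
    return haystack.includes(needle);
  });
}
```

With a handful of agents, a linear scan like this costs next to nothing; full-text search and sorting only become relevant as the catalog grows.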

From an engineering perspective, the project also includes a test suite with over 30 tests, a CI/CD pipeline, and a Docker-based setup for consistent deployment.

Overall, the system is functional and, more importantly, provides a foundation that can be extended without major restructuring.

As expected for an MVP, several important aspects are not yet implemented.

Error handling is minimal, and environment configuration lacks proper validation. Search is limited to basic functionality and does not include full-text capabilities or advanced sorting. Accessibility has not been fully addressed.
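Environment validation is a gap that can be closed with little code, and it could also help turn the kind of Docker build-time variable errors mentioned earlier into a single clear message. A minimal, dependency-free sketch follows. The variable list is an assumption: `NEXTAUTH_URL` and `NEXTAUTH_SECRET` are the names NextAuth conventionally reads, while `GOOGLE_CLIENT_ID` and `GOOGLE_CLIENT_SECRET` are a common naming choice for the Google provider, not confirmed project settings.

```typescript
// Hypothetical list of variables the app needs at runtime.
const REQUIRED_ENV = [
  "NEXTAUTH_URL",
  "NEXTAUTH_SECRET",
  "GOOGLE_CLIENT_ID",
  "GOOGLE_CLIENT_SECRET",
] as const;

type RequiredEnvName = (typeof REQUIRED_ENV)[number];
type AppEnv = Record<RequiredEnvName, string>;

// Validate once and fail fast with every missing name listed,
// instead of failing later with an undefined value deep inside a request.
function loadEnv(source: Record<string, string | undefined>): AppEnv {
  const missing = REQUIRED_ENV.filter((name) => !source[name]?.trim());
  if (missing.length > 0) {
    throw new Error(`Missing required environment variables: ${missing.join(", ")}`);
  }
  const env = {} as AppEnv;
  for (const name of REQUIRED_ENV) {
    env[name] = source[name]!.trim(); // safe: presence checked above
  }
  return env;
}
```

In the app itself, the call would be `loadEnv(process.env)` in a server-only module. With Docker, it is worth deciding deliberately whether this check runs at image build time or at container start, since variables injected only at runtime are not present during the build.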

On a structural level, the system still relies on a static data source rather than a real backend or database, and there are no admin tools or CMS for managing content.

All of these were consciously deprioritized. The goal at this stage was to reach a working version quickly while keeping the door open for future improvements.

This experiment shows how far a small team – or even a single developer – can go with a limited scope, modern tools, and AI support.

The result is not a finished product but a functional foundation.

AI played an important role in speeding up development and reducing friction, especially when working with a new stack. At the same time, it remains a tool that depends heavily on clear requirements and human oversight.

The next step is to understand how well this approach scales beyond the MVP stage.