
“AI is now writing code, and I’m acting as its tamer, filtering through neural network opinions to find one effective implementation.”

This observation captures a fundamental shift happening in software development. The bottleneck is no longer typing code – it’s articulating what needs to be built.

To understand how teams are adapting, we talked with a senior enterprise architect specializing in domain-driven design and a frontend developer working extensively with AI tools. We also surveyed developers at Setronica about their experiences with specifications and AI-assisted development.

This guide shows you how specification-driven development works in practice, when it helps, when it doesn’t, and how to implement it effectively in your team.

In traditional development, you write code first and maybe document it later. The code is the truth, and documentation (if it exists) is often outdated.

In spec-driven development, or SDD, the specification is formalized first using standards like OpenAPI, Protocol Buffers, or GraphQL schemas. The code implements this specification, and tooling validates that the implementation matches the contract. Documentation is generated automatically from the specification and stays current because the spec is what drives development.
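To make “tooling validates that the implementation matches the contract” concrete, here is a minimal sketch in Python. It assumes a hypothetical `USER_SCHEMA` written in a tiny subset of JSON Schema (only `type`, `required`, and `properties`). Real projects would use an OpenAPI validator rather than this hand-rolled checker; the point is only that conformance becomes a mechanical check instead of a judgment call.

```python
from typing import Any

# Hypothetical contract for a user resource, written as a small subset of JSON Schema.
USER_SCHEMA: dict[str, Any] = {
    "type": "object",
    "required": ["id", "email"],
    "properties": {
        "id": {"type": "integer"},
        "email": {"type": "string"},
        "display_name": {"type": "string"},
    },
}

# Map schema type names to Python types.
_TYPES = {"object": dict, "string": str, "integer": int, "array": list, "boolean": bool}


def validate(payload: Any, schema: dict[str, Any], path: str = "$") -> list[str]:
    """Return a list of contract violations; an empty list means the payload conforms."""
    errors: list[str] = []
    expected = _TYPES[schema["type"]]
    # bool is a subclass of int in Python, so reject it explicitly for "integer".
    if not isinstance(payload, expected) or (schema["type"] == "integer" and isinstance(payload, bool)):
        return [f"{path}: expected {schema['type']}, got {type(payload).__name__}"]
    if schema["type"] == "object":
        for field in schema.get("required", []):
            if field not in payload:
                errors.append(f"{path}.{field}: required field missing")
        for field, sub_schema in schema.get("properties", {}).items():
            if field in payload:
                errors.extend(validate(payload[field], sub_schema, f"{path}.{field}"))
    return errors


# Responses produced by the implementation (hand-written or AI-generated).
print(validate({"id": 7, "email": "a@example.com"}, USER_SCHEMA))  # []
print(validate({"id": "7"}, USER_SCHEMA))
# ['$.email: required field missing', '$.id: expected integer, got str']
```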

“Think API-first: you define behavior first, then build implementation. The specification becomes your single source of truth. When requirements change, you update the specification first, then regenerate or update the implementation accordingly.”

As AI accelerates coding, teams are realizing that clear, upfront specifications are key to keeping projects on track. This section explores why specs have become more important than ever.

AI shifts complexity from writing code to defining clear requirements and system design. As a result, breaking down tasks and understanding the entire system matters more than focusing on individual code components. Verifying results becomes more important than producing them.

When code generation accelerates, problem formulation becomes the bottleneck. You can generate code faster than ever, but you can’t formulate problems faster. The skill of clear specification writing simply grows in value.

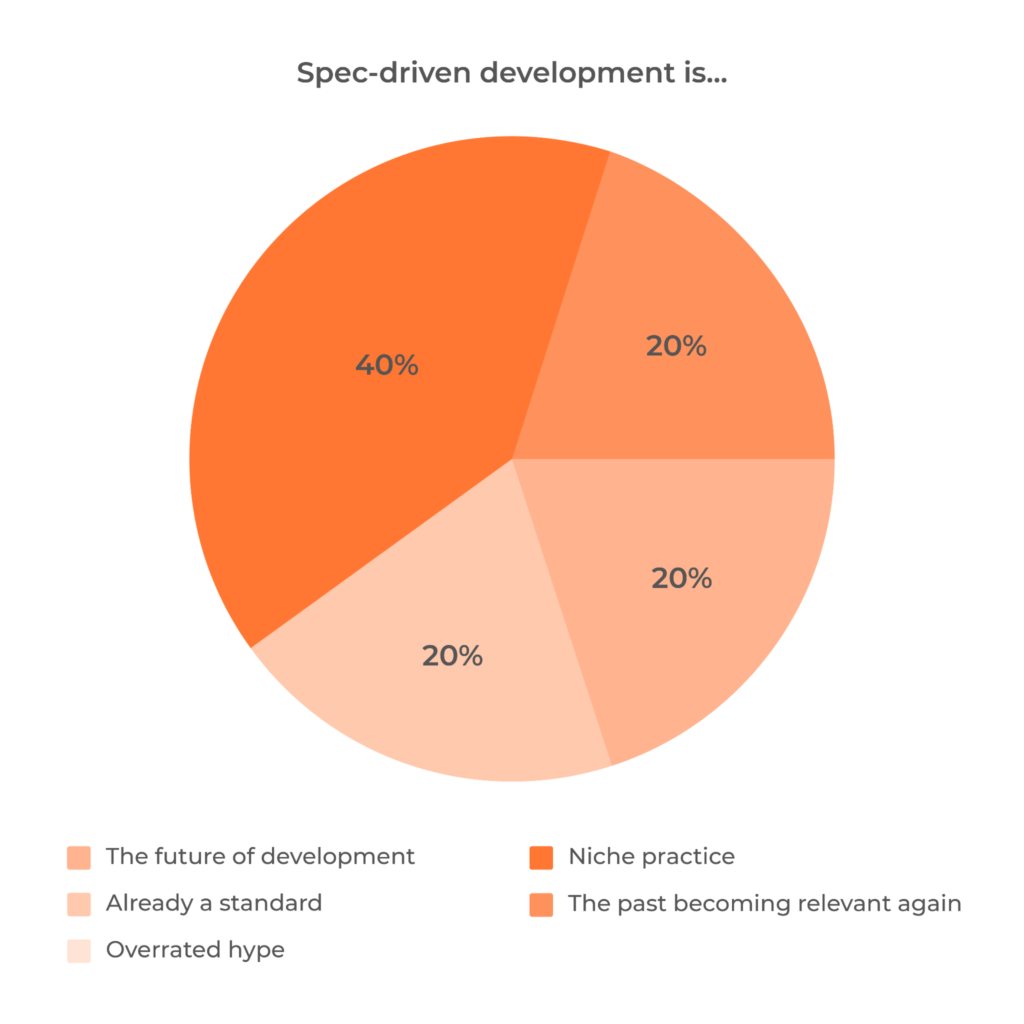

In a recent survey of developers at Setronica, all respondents agreed that AI output quality depends entirely on specification quality. This isn’t a minor correlation – it’s a complete dependency.

Without a clear specification, AI development turns into a chaotic cycle of constant edits. You’re continuously redirecting the AI, fixing misunderstandings, and patching problems. With a good specification, AI seems to gain common sense about how the task should be solved. The developer’s role shifts from constant course correction to focused code review.

The difference is dramatic. Large tasks without specifications require constant developer intervention. The same tasks with specifications let AI work autonomously, with developers verifying results rather than babysitting the process.

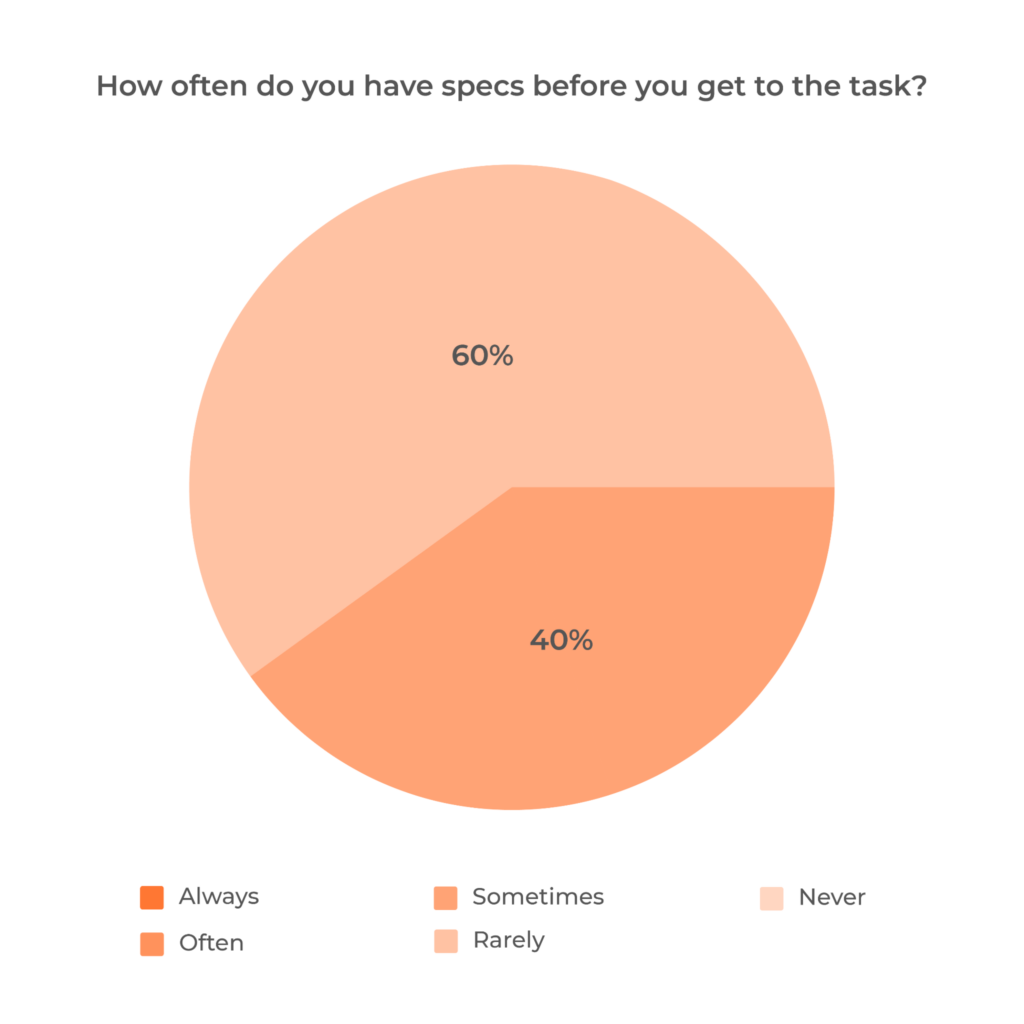

The current state across most teams: specifications appear rarely or inconsistently. When they exist, they usually take the form of Jira tasks, text documents, or verbal agreements. Most teams lack standardized processes for specification work.

But adoption is happening organically. One developer described their evolution: it started because breaking development planning into phases felt more convenient. Later, they consciously began writing specifications for medium and large tasks regularly. This pattern is common – teams don’t adopt spec-driven development from methodology books. They adopt it because it solves real problems they’re facing with AI-assisted development.

All surveyed developers changed their approach to describing tasks since AI adoption. The shift is already happening. The question is whether to formalize it deliberately or let it happen haphazardly.

Spec-driven development isn’t just about writing docs first – it’s a step-by-step approach that helps teams build the right thing efficiently. Let’s break down how it works in practice.

The first step isn’t writing specifications – it’s understanding what you’re building and why. Start by establishing the bounded context: what does this system do, what doesn’t it do, and how does it interact with neighboring systems?

Most teams start with a domain expert’s mental model and a ticket with 3-5 lines of description. Your job is to turn that mental model into a formalized technical solution. This means identifying not only the business processes that were explicitly requested but also the edge cases and scenarios the business didn’t account for but that are physically possible in your domain.

This phase is system thinking, not just requirements gathering. You’re mapping the territory before building anything.

The hardest part isn’t knowing what to include – it’s translating the mental model in stakeholders’ heads into a formalized specification.

Start by choosing the right format for your use case. REST APIs naturally fit OpenAPI. If you’re building high-performance distributed systems, Protocol Buffers and gRPC make sense. Event-driven architectures use Avro or AsyncAPI. Don’t fight the format – match it to your architecture.

The translation process is iterative. Your first specification draft will miss edge cases. Share it with the team. Walk through scenarios together. What happens when the external service is down? What if the user’s token expires mid-request? These questions reveal gaps in the initial spec.

Many teams find it helpful to work backwards from examples. Instead of starting with abstract schema definitions, write concrete examples of API requests/responses or event payloads. Then formalize those examples into the specification. This grounds the spec in reality rather than theory.
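Working backwards from a concrete payload can be partly mechanized. The sketch below uses a hypothetical helper, `schema_from_example`, to draft a JSON-Schema-style skeleton from one example event. It marks every observed field as required, which is only a first draft that the team then reviews, relaxes, and annotates.

```python
import json
from typing import Any


def schema_from_example(value: Any) -> dict[str, Any]:
    """Draft a JSON-Schema-like skeleton from one concrete example value."""
    if isinstance(value, bool):  # check bool before int: bool is a subclass of int
        return {"type": "boolean"}
    if isinstance(value, int):
        return {"type": "integer"}
    if isinstance(value, float):
        return {"type": "number"}
    if isinstance(value, str):
        return {"type": "string"}
    if value is None:
        return {"type": "null"}
    if isinstance(value, list):
        # Assume a homogeneous array and infer the item type from the first element.
        return {"type": "array", "items": schema_from_example(value[0]) if value else {}}
    if isinstance(value, dict):
        return {
            "type": "object",
            # Every field seen in the example is treated as required in the first draft.
            "required": sorted(value),
            "properties": {k: schema_from_example(v) for k, v in value.items()},
        }
    raise TypeError(f"unsupported example value: {value!r}")


# Hypothetical example event payload agreed on with the team.
order_placed = {"order_id": "o-123", "total_cents": 4599, "items": [{"sku": "A1", "qty": 2}], "gift": False}
print(json.dumps(schema_from_example(order_placed), indent=2))
```

The generated draft is a conversation starter, not a finished spec: optional fields, enums, and value ranges still have to come from the walkthrough with the team.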

Don’t aim for perfection on the first pass. Record only what breaks the system when ambiguous. You’ll expand formalization as you encounter actual problems during implementation. Specifications that try to anticipate every future scenario often miss the real issues teams face.

Once your specification is formalized, tooling can generate significant portions of your implementation automatically. From an OpenAPI specification, for example, you can generate server implementations, client libraries, and documentation.

In backend projects that use deterministic code generation from specifications, the generated part of the implementation is complete and accurate. This eliminates entire categories of careless errors: typos, missing fields, and incorrect types. The specification is the contract, and generated code implements that contract exactly.
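Real generators such as openapi-generator emit full model classes, but the core idea fits in a few lines. In this sketch, the `generate_model` helper and the `PAYMENT_SCHEMA` contract are hypothetical; it builds a Python dataclass from a schema with the standard library’s `dataclasses.make_dataclass`, so a misspelled or missing field fails at construction time instead of slipping through. Note that dataclasses do not check value types at runtime, so this sketch covers the “typos and missing fields” part of the claim only.

```python
from dataclasses import field, make_dataclass
from typing import Any, Optional

# Hypothetical contract: field names and types come from the spec, not from hand-typed code.
PAYMENT_SCHEMA = {
    "required": ["payment_id", "amount_cents"],
    "properties": {
        "payment_id": {"type": "string"},
        "amount_cents": {"type": "integer"},
        "note": {"type": "string"},
    },
}

_PY_TYPES = {"string": str, "integer": int, "number": float, "boolean": bool}


def generate_model(name: str, schema: dict[str, Any]) -> type:
    """Build a dataclass whose fields mirror the schema's properties."""
    required = set(schema.get("required", []))
    fields = []
    # Dataclasses need fields without defaults before fields with defaults.
    for prop, spec in sorted(schema["properties"].items(), key=lambda kv: kv[0] not in required):
        py_type = _PY_TYPES[spec["type"]]
        if prop in required:
            fields.append((prop, py_type))
        else:
            fields.append((prop, Optional[py_type], field(default=None)))
    return make_dataclass(name, fields)


Payment = generate_model("Payment", PAYMENT_SCHEMA)
print(Payment(payment_id="p-1", amount_cents=1200))
# Payment(payment_id='p-1', amount_cents=1200, note=None)

try:
    Payment(payment_id="p-2", amount_cent=1200)  # typo in a field name
except TypeError as exc:
    print("rejected:", exc)
```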

How do you know you’ve understood enough to start implementing? When you have formalized business process descriptions covering both the explicitly stated use cases and additional scenarios that, while not specified by the business, are possible within your domain.

At this point, you – and the AI – have a clear, detailed specification to work from. For example, a payment system spec should include standard transactions as well as edge cases like expired cards or network failures. Sometimes, quick prototyping helps verify these assumptions before full implementation.
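One way to keep such edge cases from living only in prose is to list them as data that both the spec review and the test suite read. In this sketch, the scenario table and the `authorize_payment` function are hypothetical; the point is that every scenario, including the ones the business never asked about, has an explicit expected outcome that can be checked.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Scenario:
    name: str
    card_expiry: date  # last valid day of the card
    gateway_up: bool
    expected: str


# Hypothetical scenario table from the payment spec: happy path plus domain edge cases.
SCENARIOS = [
    Scenario("standard transaction", date(2030, 1, 31), True, "approved"),
    Scenario("expired card", date(2020, 1, 31), True, "declined_card_expired"),
    Scenario("network failure", date(2030, 1, 31), False, "retry_later"),
]


def authorize_payment(card_expiry: date, gateway_up: bool, today: date) -> str:
    """Hypothetical implementation under test."""
    if card_expiry < today:
        return "declined_card_expired"
    if not gateway_up:
        return "retry_later"
    return "approved"


def check_scenarios(today: date) -> list[str]:
    """Return the names of scenarios whose actual outcome differs from the spec."""
    return [s.name for s in SCENARIOS
            if authorize_payment(s.card_expiry, s.gateway_up, today) != s.expected]


print(check_scenarios(today=date(2025, 6, 1)))  # []
```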

Verification also means translating your understanding back to stakeholders and confirming alignment before implementation. Different teams use different verification approaches, but the principle is the same: validate understanding before coding.

When requirements change – and they will – you update the specification first. Then you regenerate or update implementation. The spec remains the source of truth throughout the project lifecycle.

Choosing the right standards and tools can make all the difference when working with specs. Here’s a look at what’s commonly used and why it matters.

The format you choose depends on what you’re building. REST APIs naturally fit OpenAPI. High-performance distributed systems benefit from Protocol Buffers and gRPC. Event-driven architectures use Avro or AsyncAPI.

The common thread: use established standards, not custom formats. The tooling ecosystem and community familiarity are worth any constraints the standard imposes.

For OpenAPI specifications, the openapi-generator toolchain can create server implementations, client libraries, and documentation from a single specification file. Protocol Buffers use the protoc compiler to generate code in multiple languages from .proto files.
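In a build script, regeneration is usually just a command invocation. This sketch assembles the two commands mentioned above. It assumes `openapi-generator-cli` and `protoc` are installed and on `PATH`, and the file names (`api/openapi.yaml`, `proto/payments.proto`) and output directories are hypothetical. The flags used (`generate -i/-g/-o` for openapi-generator, `--proto_path` and `--python_out` for protoc) are the standard ones, but check them against the versions you install.

```python
import shlex
import shutil
import subprocess


def openapi_command(spec: str, generator: str, out_dir: str) -> list[str]:
    """Command that regenerates code from an OpenAPI file with openapi-generator."""
    return ["openapi-generator-cli", "generate", "-i", spec, "-g", generator, "-o", out_dir]


def protoc_command(proto_file: str, proto_root: str, out_dir: str) -> list[str]:
    """Command that compiles a .proto file into Python modules (create out_dir first)."""
    return ["protoc", f"--proto_path={proto_root}", f"--python_out={out_dir}", proto_file]


def regenerate(commands: list[list[str]], dry_run: bool = False) -> None:
    """Run each generator; fail loudly if a tool is missing or generation fails."""
    for cmd in commands:
        if dry_run:
            print(shlex.join(cmd))
            continue
        if shutil.which(cmd[0]) is None:
            raise FileNotFoundError(f"{cmd[0]} is not installed or not on PATH")
        subprocess.run(cmd, check=True)  # a non-zero exit raises and fails the build


if __name__ == "__main__":
    # Dry run prints the commands; set dry_run=False in the real build step.
    regenerate([
        openapi_command("api/openapi.yaml", "python", "generated/client"),
        protoc_command("proto/payments.proto", "proto", "generated/proto"),
    ], dry_run=True)
```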

These tools aren’t just conveniences – they’re the implementation of spec-driven development. Your specification becomes executable. Changes to the specification automatically propagate to implementation through regeneration.

Validation tools ensure your implementation matches your specification. OpenAPI validators can run in CI/CD pipelines, failing builds when code diverges from the contract. Contract testing frameworks like Pact verify that services communicate according to their specified contracts.
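Pact itself records consumer expectations and replays them against the provider. The sketch below reproduces only the core idea in plain Python and does not use Pact’s API: each hypothetical consumer declares the response fields and types it relies on, and the check reports which consumers a provider response would break.

```python
from typing import Any

# Hypothetical expectations: what each consumer actually reads from GET /users/{id}.
CONSUMER_EXPECTATIONS: dict[str, dict[str, type]] = {
    "web-frontend": {"id": int, "email": str},
    "mobile-app": {"id": int, "display_name": str},
}


def broken_consumers(response: dict[str, Any]) -> dict[str, list[str]]:
    """Map each consumer to the fields it needs that are missing or mistyped."""
    report: dict[str, list[str]] = {}
    for consumer, fields in CONSUMER_EXPECTATIONS.items():
        # A missing field yields None, which fails the isinstance check.
        problems = [name for name, expected_type in fields.items()
                    if not isinstance(response.get(name), expected_type)]
        if problems:
            report[consumer] = problems
    return report


# A provider change dropped display_name: the frontend is fine, the mobile app breaks.
print(broken_consumers({"id": 7, "email": "a@example.com"}))  # {'mobile-app': ['display_name']}
```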

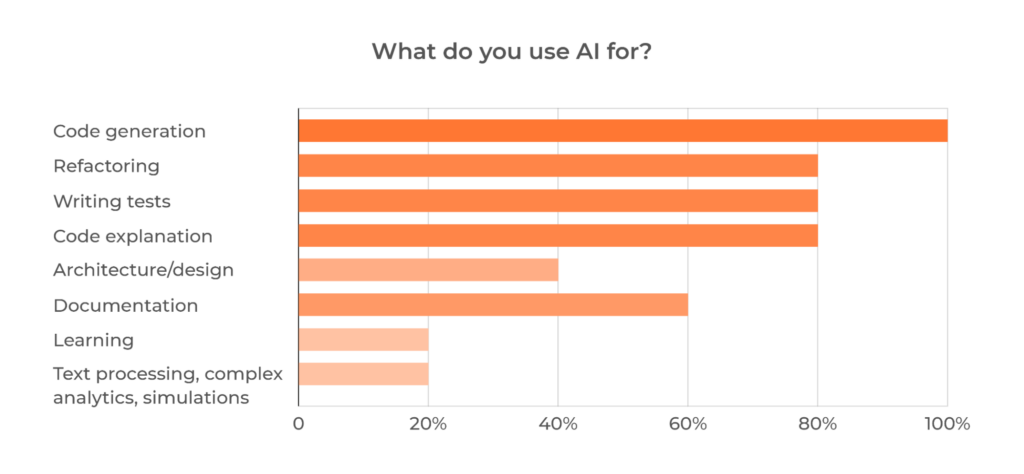

Developers at Setronica actively use multiple AI tools in their spec-driven workflows: GitHub Copilot, Claude, Gemini, ChatGPT, and specialized tools like Kiro. All of them work better with formal specifications than with vague prompts.

The practical workflow: describe the specification with AI assistance in a markdown file, breaking development planning into phases. This approach reduces AI mistakes significantly. The specification gives AI a structured target instead of requiring it to infer requirements from incomplete context.

From a specification, AI can generate complete endpoint implementations, automated test suites with edge cases, client SDKs in multiple languages, and interactive documentation. The specification becomes a multiplier for AI capabilities.

Developers use AI for code generation, refactoring, writing tests, code explanation, architecture design, and documentation. All these tasks benefit from formal specifications as starting points.

Not every task benefits from a formal spec. Knowing when to invest time in writing one – and when to move fast without it – can save headaches down the line.

Specification value increases with task complexity. The pattern is clear across all developers we interviewed: specifications make sense when coordination costs are high or when you need deterministic behavior.

Specifications aren’t always worth the overhead. The surveyed developers consistently identify scenarios where spec-first approaches slow down rather than accelerate development.

The threshold question: does the coordination cost exceed the specification cost? If you’re building something that multiple people or systems depend on, write the specification. If you’re making a quick fix that only affects one component, skip it.

The time it takes to develop specifications is the most common complaint among developers. But experienced practitioners treat it as an investment. The time cost is real; the skill lies in learning when the investment pays off.

Writing specs that actually help means focusing on clear, actionable details without overcomplicating things. This section covers what to include and what to avoid.

A good specification is easy to read and not overloaded. It describes the required technical stack, the task essence, and clear acceptance criteria. The specification should be broken into phases for implementation.

Acceptance criteria. Make requirements testable. Instead of “system should be fast,” write “API responses under 200ms at p95 for 1000 req/sec load.”
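A criterion like this can be checked mechanically in a load-test job. This sketch uses the nearest-rank definition of a percentile (one of several common definitions; load-testing tools may interpolate differently) and hypothetical latency samples. Generating the 1000 req/sec load itself is left to your load-testing tool.

```python
import math


def percentile_nearest_rank(samples: list[float], pct: float) -> float:
    """Smallest sample such that at least pct% of all samples are at or below it."""
    if not samples or not 0 < pct <= 100:
        raise ValueError("need at least one sample and 0 < pct <= 100")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def meets_latency_criterion(latencies_ms: list[float], p95_budget_ms: float = 200.0) -> bool:
    """Acceptance criterion from the spec: p95 latency must stay under the budget."""
    return percentile_nearest_rank(latencies_ms, 95) < p95_budget_ms


# Hypothetical samples: 95 fast responses and 5 slow outliers.
samples = [120.0] * 95 + [450.0] * 5
print(percentile_nearest_rank(samples, 95))  # 120.0
print(meets_latency_criterion(samples))  # True
```

With one more slow outlier (94 fast, 6 slow), the 95th-ranked sample becomes 450 ms and the criterion fails, which is exactly the kind of unambiguous verdict “system should be fast” can never give.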

Here’s a practical example for OAuth2 authentication:

Feature: User authentication via OAuth2

Acceptance criteria:

Edge cases:

Technical stack:

This gives implementers (human or AI) everything needed without over-specification.
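Acceptance criteria and edge cases in a spec like this are most useful when they translate directly into tests. As a hypothetical illustration (the 30-second leeway and the exclusive boundary are assumptions, not part of the example above), an edge case such as “an expired access token is rejected” can be pinned down with an explicit clock and clock-skew leeway, so human and AI implementers produce the same boundary behavior.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy from the spec: allow 30 s of clock skew between services.
CLOCK_SKEW_LEEWAY = timedelta(seconds=30)


def token_is_valid(expires_at: datetime, now: datetime, leeway: timedelta = CLOCK_SKEW_LEEWAY) -> bool:
    """A token is valid strictly before expires_at + leeway (timezone-aware datetimes only)."""
    if expires_at.tzinfo is None or now.tzinfo is None:
        raise ValueError("use timezone-aware datetimes")
    return now < expires_at + leeway


exp = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
print(token_is_valid(exp, exp + timedelta(seconds=29)))  # True: inside the leeway
print(token_is_valid(exp, exp + timedelta(seconds=30)))  # False: boundary is exclusive
```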

A bad specification is one you cannot implement due to contradictions, vague formulations, lack of boundaries, and missing negative scenarios. Worse than having no specification is having one that creates false confidence and hides problems.
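Some of these defects can be caught mechanically before anyone implements the spec. The sketch below lints a hypothetical operation definition shaped loosely like an OpenAPI operation (a `requestBody` schema plus a `responses` map keyed by status code). It flags required fields that the schema never defines (a contradiction) and operations that document no 4xx or 5xx response (a missing negative scenario). It is a simplified illustration, not a full OpenAPI linter.

```python
from typing import Any


def lint_operation(op: dict[str, Any]) -> list[str]:
    """Flag contradictions and missing negative scenarios in one operation definition."""
    issues: list[str] = []
    schema = op.get("requestBody", {})
    defined = set(schema.get("properties", {}))
    for name in schema.get("required", []):
        if name not in defined:
            issues.append(f"required field '{name}' is never defined")
    statuses = [str(code) for code in op.get("responses", {})]
    if not any(code.startswith(("4", "5")) for code in statuses):
        issues.append("no error responses (4xx/5xx) documented")
    return issues


# Hypothetical draft: 'password' is required but undefined, and only the happy path is described.
draft = {
    "requestBody": {"required": ["email", "password"], "properties": {"email": {"type": "string"}}},
    "responses": {"201": {"description": "created"}},
}
print(lint_operation(draft))
# ["required field 'password' is never defined", 'no error responses (4xx/5xx) documented']
```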

Specifications slow down development when their level of detail exceeds their value. This happens when the specification isn’t properly formalized, when teams follow the text literally instead of solving the problem, or when specifications document internal implementation details that should remain flexible.

Red flags:

The solution: maintain accepted standards and keep specifications focused on contracts. Better to have incomplete but accurate specifications than exhaustive but contradictory ones.

Start by recording only what breaks the system when ambiguous, then expand the formalization level based on actual problems encountered. Don’t try to anticipate every detail upfront.

AI is reshaping how teams approach specs, with different workflows emerging depending on goals and risk tolerance. Here’s how Setronica teams are adapting.

Teams are using spec-driven development with AI in different ways, depending on their context and risk tolerance.

The enterprise approach. Use AI for initial domain analysis to identify pitfalls early. Write integration test scenarios with AI assistance. Employ AI for specific algorithm implementations. But maintain human control over architecture and verification. AI serves as an assistant, not an autonomous developer.

The workflow:

The rapid development approach. Use AI from the start. Describe specifications with AI assistance, breaking work into phases. Let AI autonomously implement modules. Switch between efficiency mode (AI-first) and learning mode (independent work with AI as reference) depending on context.

The workflow:

Both approaches rely on specifications to maintain quality. The difference is how much autonomy AI gets during implementation.

Developers write detailed descriptions for AI regularly. But there’s an important distinction between prompts and specifications.

A prompt is an operational instruction for immediate AI action. “Generate a REST endpoint for user registration with email validation.” It’s task-specific and temporary.

A specification is a long-lived contract. It’s versioned, multiple consumers depend on it, and it outlives individual implementation tasks. An OpenAPI specification for your user service is a specification – it defines contracts that frontend, mobile apps, and third-party integrations all depend on.

The confusion happens because specifications can be used to guide AI implementation. You can feed an OpenAPI spec to Claude and ask it to generate the implementation. In this context, the spec functions like an elaborated prompt. But the specification exists independently of that particular AI interaction.
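When a spec guides an AI assistant, the prompt is a thin, disposable wrapper around the long-lived contract. This sketch only assembles the text: the `build_prompt` helper and the trimmed spec are hypothetical, and it makes no call to any AI provider’s API.

```python
import json
from typing import Any


def build_prompt(spec: dict[str, Any], operation_id: str, stack: str) -> str:
    """Wrap one operation from a versioned spec in a one-off implementation prompt."""
    operation = None
    for path, methods in spec["paths"].items():
        for method, op in methods.items():
            if op.get("operationId") == operation_id:
                operation = {"path": path, "method": method.upper(), **op}
    if operation is None:
        raise KeyError(f"operationId {operation_id!r} not found in spec")
    return (
        f"Implement the following operation using {stack}.\n"
        "Follow the contract exactly; do not add or rename fields.\n"
        f"Spec version: {spec['info']['version']}\n\n"
        + json.dumps(operation, indent=2, sort_keys=True)
    )


# Hypothetical, heavily trimmed OpenAPI-style document.
spec = {
    "info": {"title": "User service", "version": "1.4.0"},
    "paths": {"/users": {"post": {"operationId": "registerUser",
                                  "responses": {"201": {"description": "created"},
                                                "422": {"description": "invalid email"}}}}},
}
print(build_prompt(spec, "registerUser", "FastAPI"))
```

The prompt is regenerated for each task and thrown away; the spec it quotes is versioned and keeps serving every other consumer.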

Think of it this way:

The choice depends on whether you’re building something that needs to last and integrate with other systems (specification) or solving an immediate internal problem (prompt).

Without good specifications, AI makes predictable errors:

The pattern is consistent: AI development without specifications turns into a chaotic cycle of continuous edits. Developers constantly redirect the AI, fix misunderstandings, and patch problems. With specifications, AI seems to understand the solution approach and requires only code review rather than constant supervision.

This reveals something important: specifications don’t prevent AI errors. They make errors detectable and recoverable.

When AI generates code from a specification, you can validate that code against the specification automatically. When AI generates code from vague prompts, you’re manually checking everything. The verification burden shifts from comprehensive manual review to automated contract validation plus focused code review.

The universal finding from our survey: AI result quality depends entirely on task description quality. Every developer rated this dependency at maximum. Poor description yields almost guaranteed poor results. Good specifications let AI complete tasks independently, with developers handling code review and verification.

Even with the best intentions, spec-driven development has its challenges. Here are some common bumps in the road – and how to smooth them out.

The most frequent complaint about specifications: they take time to develop. This is real overhead, not imagined. Writing good specifications requires thought, iteration, and validation with stakeholders.

The solution isn’t to skip specifications – it’s to approach them incrementally. Start by recording only what breaks the system when ambiguous. Expand formalization based on actual problems encountered, not hypothetical future needs.

Week 1: Specify only API contracts for critical endpoints

Week 2: Add failure scenarios after encountering the first production issues

Week 3: Document edge cases discovered during testing

Ongoing: Expand based on real needs, not anticipated ones

Don’t try to anticipate every detail upfront. Specifications evolve as your understanding evolves. Experienced developers see specification time as investment – it’s a matter of learning when that investment pays off.

The second most common problem: irrelevance. Specifications get written, then the code moves on without them. Small edits and changes don’t get reflected back into the original specification, and documentation becomes misleading instead of helpful.

The solution: treat specifications like code.

Version control specifications alongside implementation. Specs live in the same repository as code, not in separate documentation systems. Changes to specs trigger the same review process as code changes.

Code review for both spec and implementation changes. When someone changes behavior, the specification update is part of the same pull request. Reviewers check both.
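A lightweight way to back this up in review is a CI step that fails when a pull request touches API handlers but not the spec. The paths below (`api/openapi.yaml`, `src/handlers/`) are hypothetical, and how you obtain the changed-file list (for example from `git diff --name-only` against the target branch) depends on your CI system.

```python
import sys

SPEC_FILES = {"api/openapi.yaml"}             # hypothetical spec location
IMPLEMENTATION_PREFIXES = ("src/handlers/",)  # hypothetical handler directory


def spec_update_missing(changed_files: list[str]) -> bool:
    """True if handler code changed in this PR but the spec did not."""
    touched_impl = any(f.startswith(IMPLEMENTATION_PREFIXES) for f in changed_files)
    touched_spec = any(f in SPEC_FILES for f in changed_files)
    return touched_impl and not touched_spec


if __name__ == "__main__":
    # Changed paths as arguments, e.g. python check_spec.py $(git diff --name-only origin/main...HEAD)
    if spec_update_missing(sys.argv[1:]):
        print("Handlers changed without a spec update; update api/openapi.yaml in this PR.")
        sys.exit(1)
```

This is a coarse heuristic: a pure refactor inside the handlers would also trip it, so you may want an explicit override for such pull requests.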

Automated validation. Does the code match the current spec? OpenAPI validators in CI/CD pipelines fail builds when code diverges from contracts. Contract testing frameworks verify that services communicate according to specified contracts.

Make spec updates part of “done”. A feature isn’t complete until the specification reflects its current behavior. This includes edge cases and error handling discovered during implementation.

When your build pipeline fails if code doesn’t match specifications, drift becomes immediately visible instead of slowly accumulating.
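The simplest form of that check compares the operations the spec declares with the routes the service actually registers. In this sketch both sets are hypothetical literals; in practice the first would be read from the spec file and the second from your web framework’s route table.

```python
# Hypothetical inputs: (METHOD, path) pairs from the spec and from the running app.
SPEC_OPERATIONS = {("GET", "/users/{id}"), ("POST", "/users"), ("DELETE", "/users/{id}")}
IMPLEMENTED_ROUTES = {("GET", "/users/{id}"), ("POST", "/users"), ("PATCH", "/users/{id}")}


def find_drift(spec_ops: set[tuple[str, str]],
               routes: set[tuple[str, str]]) -> dict[str, list[tuple[str, str]]]:
    """Report operations missing from the code and routes missing from the spec."""
    return {
        "missing_in_code": sorted(spec_ops - routes),
        "undocumented_in_spec": sorted(routes - spec_ops),
    }


def drift_exit_code(drift: dict[str, list[tuple[str, str]]]) -> int:
    """Exit status for CI: non-zero whenever spec and code disagree."""
    return 1 if any(drift.values()) else 0


drift = find_drift(SPEC_OPERATIONS, IMPLEMENTED_ROUTES)
for kind, ops in drift.items():
    for method, path in ops:
        print(f"{kind}: {method} {path}")
print("exit code:", drift_exit_code(drift))  # in CI: sys.exit(drift_exit_code(drift))
```

Frameworks often spell path parameters differently (for example `<id>` instead of `{id}`), so normalize both sides before comparing.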

What’s worse: a bad specification or no specification at all?

A bad specification with contradictions is worse than no specification. It creates false confidence and hides problems. Teams think they have alignment when they actually have conflicting interpretations embedded in a formal document.

Think of it like code: good code that doesn’t work is easier to fix than bad code that passes tests. Non-working but well-structured code shows where the bug is. Bad code that happens to work is a maintenance nightmare.

The resolution:

If you can’t write a clear, consistent specification, your understanding of the problem is insufficient. Keep clarifying with stakeholders before implementing. A vague specification isn’t a time-saver – it’s technical debt that compounds during implementation.

The guideline: better to have a minimal but correct specification than a comprehensive but contradictory one. Start small, expand based on actual needs, and maintain internal consistency above all.

The developers we talked to didn’t set out to adopt spec-driven development because of methodology trends. They adopted it because AI-assisted development forced the issue. Without clear specifications, AI generates code that requires constant correction. With specifications, AI becomes a reliable implementation tool.

At Setronica, we’re formalizing spec-driven development as our standard approach. Not because it’s theoretically better, but because it’s what AI reality requires. Our developers already write detailed task descriptions for AI regularly. We’re choosing to standardize that practice and give teams the right tools and formats.

This isn’t a complete methodology overhaul. Most of our teams are already working this way informally – breaking tasks into phases, documenting requirements more carefully, and validating contracts before implementation. We’re making it explicit and consistent across projects.

The shift is already happening across the industry. Code generation is fast. Problem formulation is slow. That imbalance makes specification writing the high-leverage skill. The developers who master clear specification writing today position themselves correctly for AI-augmented development that’s already here.

For teams starting out: choose one medium-complexity feature. Write the specification before implementation. Feed it to your AI assistant. Compare the result quality against your traditional workflow. The difference will be clear.

Specifications aren’t overhead. In the AI era, they’re how you multiply your team’s effectiveness.

✍️ Want to discuss how spec-driven development can work for your projects? Contact Setronica to talk with our team about implementing this approach in your organization.